The United States has “a tight time window to adapt” to the “civilizational” challenge of AI, according to a former senior Pentagon thinker who’s joining Anthropic as a “strategist-in-residence.”

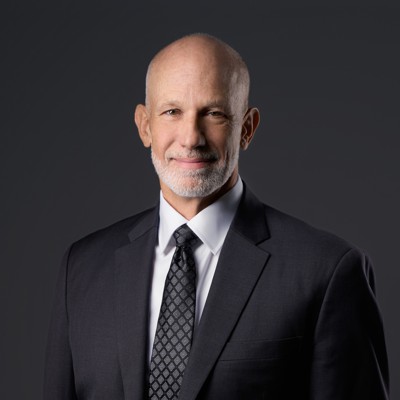

James Baker led the Defense Department’s Office of Net Assessment, often referred to as the “Pentagon’s Think Tank,” from 2015 to 2025, when it was temporarily closed by the Trump administration. At Anthropic, the AI company now in the midst of a six-month withdrawal from federal service ordered by President Trump, Baker will lead analysis of how AI is affecting U.S. institutions and competition with China, the company announced Friday.

As ONA director, Baker advised defense secretaries and national security advisors on the long-term effects of emerging technology on national security; he had earlier served on the Joint Staff and in other advisory roles.

For decades, ONA helped the U.S. military adapt to social, economic, environmental, and technological trends. The office was established in 1973 by Andrew Marshall, a policy strategist in the Nixon administration. Using a data-driven, “system-of-systems” approach, it sought to predict the interrelation and effects of trends from tech development to military affairs to labor. The office forecast how information technology would greatly increase the speed of warfare and the availability and precision of new weapons, including cyber and electromagnetic effects. These ideas prompted rethinking of force structure and underscored the need to accelerate acquisition reform.

In its final decade, ONA sought to understand the implications of accelerating artificial intelligence, especially for Cold War institutions that Congress has been slow to change. A 2016 summary study, which formed the basis for an unclassified 2017 Belfer Center examination, identified a “Cambrian explosion” in robotics and artificial intelligence that would make warfare cheaper and faster, and reduce the advantage of expensive investments in “exquisite platforms” such as $90 million jets.

That trend is playing out today in Ukraine, which is using drones to decimate expensive Russian naval and air defense assets.

But in an interview, Baker said the national-security effects of AI stretch far beyond the military. Only by appreciating the vulnerability of all institutions, including the Defense Department, will society be able to adapt to the changes that are coming.

“We aren’t spending enough time thinking about the implications of recursive self-improvement,” he said, meaning intelligent systems that improve themselves far faster than their creators anticipate. “The greatest risk is the long-term viability of present institutions in war and in peace. That’s one of the questions I came to Anthropic to work on. It’s a multi-decade structural—even civilizational—problem.”

The Defense Department shuttered ONA last March, a move that a spokesperson said at the time was part of a series of cuts to basic research not immediately applicable to weapons. The spokesperson said that the reorganization would help the Pentagon address “pressing national security challenges.” In October, the department reinstated a smaller version of ONA.

In March, the White House designated Anthropic a supply-chain risk after company executives declined to make their tools available for mass surveillance of U.S. citizens or to guide fully autonomous weapons.

In April, Anthropic announced that it would limit the release of a new AI tool dubbed Mythos to a handful of federal agencies and corporations to help discover cyber vulnerabilities. The number of new vulnerabilities logged in the National Vulnerability Database nearly doubled this month.
